Accessibility in Third level education – the scale of the problem

AHEAD do some amazing work within the third level sector to create inclusive environments in education and employment for people with disabilities, but a recent report from them drove home the scale of the problem that we are facing in third level.

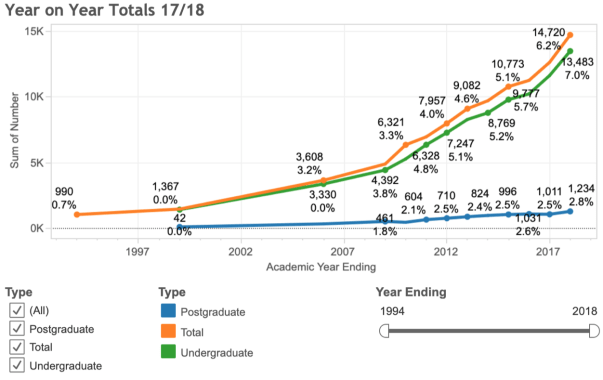

The most recent figures show that 6.6% of the total student population in higher education is registered with disability support services. That figure is brilliant yet alarming at the same time. It is brilliant because it represents huge growth over the last 20 years. Before now those potential students would not have reached higher education: they would have struggled to complete second level and, even if they did complete it, would have been strongly discouraged from going on to third level. Having this level of growth in such a short space of time is a huge credit to the work that is going on at primary and second level, supporting those students and helping them achieve their potential through education. The knock-on effect this support has on the families of those students is phenomenal and its importance cannot be overstated.

But the scary element of that 6.6% is that it is only a fraction of the real figure. The real figure is hidden because not all students choose to register with the services, and some students may not even realize that they have a difficulty. For a variety of reasons they may not yet have received a diagnosis and as a result cannot avail of the services on offer. To give you a rough indication, when AHEAD completed an anonymous survey with students, 12% of respondents disclosed a disability.

Let’s break that down with some simple examples using that 12% figure. Most classes in higher education would have at least 50 students, which means 6 students with a disability in most classes. 300 students in a class is not unusual and that equates to 36 students. If a lecturer does not implement inclusive practices in their teaching by default they are excluding those students, or at the very least putting them at a disadvantage.
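
The sums above are easy to repeat for your own class sizes. Here is a rough sketch in Python that applies the two rates quoted in this post (6.6% registered, 12% disclosed in the anonymous survey) to a class. The function name is just something I made up for illustration, and the results are estimates, not counts of real students.

```python
# Rates quoted in the post; everything else here is illustrative.
REGISTERED_RATE = 0.066  # registered with disability support services
DISCLOSED_RATE = 0.12    # disclosed a disability in the anonymous survey


def expected_students(class_size: int, rate: float) -> int:
    """Return the expected number of students, rounded to a whole person."""
    return round(class_size * rate)


if __name__ == "__main__":
    for class_size in (50, 300):
        registered = expected_students(class_size, REGISTERED_RATE)
        disclosed = expected_students(class_size, DISCLOSED_RATE)
        print(f"Class of {class_size}: about {registered} registered, "
              f"about {disclosed} likely to have a disability")
```

The gap between the two numbers for each class is the group that a lecturer will not hear about through the disability service.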

Invisible disabilities

To make things a little more challenging, not all disabilities are visible. As a matter of fact very few are visible to a lecturer, particularly one with large class sizes. As a result lecturers sometimes think that none of their students have a disability. Based on the basic maths outlined above, there is somebody in every class that needs support.

My final point is in relation to the consequences of not having the support. The most obvious consequence is that students will struggle and potentially fail their exams, which can lead to a drop in confidence and self-esteem and an increase in pressure on the students. This can be more serious for people with a disability than for those without. Looking at one particular disability, Attention Deficit Hyperactivity Disorder (ADHD), a study of nearly 22,000 Canadian adults found that the rate of attempted suicide for those with ADHD was nearly five times higher than for those without ADHD.

So to conclude, in advance of the updated National Access Plan for education which is due for release in the coming days, the scale of the problem is much bigger than most people realize. It is not just the problem of the disability services in an institution – it is the problem of everyone in an institution.

Farewell (not goodbye) to DCU as I move to my next chapter

I’ve had an eclectic career to say the least and I’ve never been afraid of taking on something new. I started as a product development chemist in Procter & Gamble in the 90s, before working in a small elearning company providing elearning on 3.5 inch floppy disks. I then worked promoting science and doing PR for the pharmaceutical industry, and from there I moved into lecturing chemistry before moving into my current area of staff development. I learned so much from each of those roles, and I am who I am because of the experiences that I have had. Next Monday I start my next chapter working in Ernst & Young.

I learned so much from the people that I worked with. Each of the roles had one thing in common: the success that I achieved was because of the people around me. You can have a wonderful strategy, loads of goals, mission statements and aspirations but, as the saying goes, culture eats strategy for breakfast. People make the culture, and good people are the reason behind success.

Every day on campus I had a chat with Mary, the lady that cleaned the Bea Orpen building, a lovely lady. Declan in the Helix sees hundreds of people come in every week, yet he knew that I drank peppermint tea and always put in a few ice cubes for me as a personal touch. Theresa in the canteen knew that I was a coeliac and at the various staff events that were on she’d always give me a nod saying “you can have that love”, or “that has wheat in it, I’ll be back in a minute with something for you”. That went right through to the former President of the University, Prof Brian MacCraith, who rang me when he found out that I was leaving. These personal touches made me feel like I belonged in DCU.

Without a shadow of a doubt the best thing about DCU is the people. I’ve said it before: DCU is like a big machine, like a car. There are bits that people see and bits they don’t, with loads of moving parts underneath the bonnet that are needed to make it work. Not many people see all these parts or even know what they do, but without them the car will not work properly. So my ask of you is to recognise your own importance, your own value and that of your colleagues, because a spark plug may look small and insignificant when compared to the overall car but without it the car will not work. So please bear with me for the next few minutes. I want to celebrate the excellent work in DCU, the work that I’ve had the privilege to be involved in.

I had the privilege of running the President’s Awards for Excellence in Teaching. When I first started we had 17 nominations. With the help of Madeleine Patton and the colleagues in the TEU we changed things around a little and for the last three years we had an average of more than 500 nominations. It is not that we have had more excellence, we’ve just had more celebration of excellence.

Declan Tuite and I were ahead of our time when in 2014, in partnership with his students, we developed Augmented Reality (AR) teaching material. We went on to partner with Comms and Marketing and we embedded AR into the DCU brochures. Another example of being ahead of our time was our work with Kate Irving in Nursing on the “Posadem” programme, a collaborative programme involving five other institutions from across Europe. Students from DCU and these five institutions all logged into our VLE to do this amazing online programme helping to promote a positive approach to dementia, a superb example of innovation that also helps transform lives and societies. I also had the privilege of working with Finian Buckley & Co in the Business School to develop one of Enterprise Ireland’s most successful leadership programmes, GoGlobal: a superb collaboration between the TEU, the Business School and Enterprise Ireland to support the development of SMEs throughout Ireland.

I had the privilege of supporting the School of Biotechnology with rolling out an online module in Immunology, one of the first partnership initiatives with Arizona State University, a strategic partner of the university. It is through this initiative that I managed to bring the amazing Clare Gormley into the team.

Loop (local name for Moodle): where do I start on Loop? When I first started, Loop was on a server underneath a desk in our IT department and very few lecturers used it. Lecturers had to request to have a page created for their modules. The few staff that used it used to complain that it crashed all the time. We’ve come a long way since then: last year we had 6 million visits to Loop. Rob and Motasem have done fantastic work in the last 18 months to bring Loop to the next level and I’m slightly jealous that I won’t be here to see the staff benefit from and embrace these new changes. With Henry and Salem providing support on the helpdesk I’ve no doubt that it will continue to go from strength to strength.

Sticking with Loop, I had the privilege of working with SS&D to roll out the first university-wide online programme to support transitions into university. To my knowledge we remain the only institution to provide this programme as soon as students accept their place.

There have been a lot of “I’s” in this post: I did this and I had the privilege. But I can’t sit down without mentioning the biggest “I” word. Without a doubt the biggest project that I was involved in was the incorporation. The TEU were one of the first units to engage with incorporation. We provided Loop to all four institutions. We joined the T&L committee in St Pats with the wonderful Anita Prunty and started to support Teaching and Learning in whatever way we could. We worked with John Smith to bring all of the staff and students over onto Loop. We were a year ahead of everyone else. We now have to support hundreds more staff and thousands of additional students, but the TEU got the better deal because we got to keep Suzanne Stone after incorporation was complete.

I have two professional highlights of my time in DCU, the first of which was being invited to give the keynote for SEDA annual conference in 2019.

For those of you that don’t know, this is the professional body for people like me and my colleagues in the TEU. Getting such a keynote was huge. I was invited to talk about our work in Assessment and Academic Integrity. For our work in DCU to be recognised and held in such high esteem by this group was such an honour and is testament to all of the hard work my team have done in this area in the past few years. Spearheaded by Fiona O’Riordan, the expertise, passion and enthusiasm that team bring to assessment is unrivalled in my opinion.

The second career highlight was being awarded a Principal Fellowship of AdvanceHE. There are over 170,000 fellows worldwide but only roughly 1,500 of them are Principal Fellows. We have three of them in DCU, which speaks volumes about the standard of Teaching & Learning in the university, not forgetting the dozens of Fellows and Senior Fellows too.

I’m going to wrap up now by saying that I have been blessed with a wonderful team in the Teaching Enhancement Unit. When Covid hit we became a very popular unit. The team all stepped up to the mark giving 200%, and all of the staff in DCU got a glimpse of what I see every day: an amazing team, supporting not only colleagues in every part of the university but graciously sharing their time and expertise with the entire sector. They are my work family. I cannot compliment them enough; they are unbelievable to work with.

We worked hard and we played hard. The TEU had our own band at one stage, with guitars, violins, even a harp. We spray painted walls with graffiti and I got to run my boss off the road while go-kart racing. I also recorded a version of YMCA in Windmill Lane Studios. Somewhere there is also a video of me at a Christmas party attempting to do the floss with one of the students’ union sabbatical officers, but the less said about that the better 🙂

I have never worked with a more cohesive, productive and supportive team and the biggest privilege that I had in DCU was to work with them.

Finally, they say if you enjoy your job you will never work a day in your life. Well, if that is true I never worked while I was here in DCU. I distinctly remember telling Prof Brian MacCraith that I would buy shares in DCU if I could. I’m now leaving a place that I never thought I would, but I would still buy those shares if I could. As I say farewell to wonderful colleagues, DCU will always hold a special place in my heart. All that I ask of my former colleagues is that you please continue to do the amazing job of transforming lives and societies and regularly take the time to recognise the role that everybody plays in “keeping the car moving”.

Assignment Planner – Increasing student success and being more inclusive at the same time

Recently I had the pleasure of presenting at the Ireland UK MoodleMoot to share news about a Moodle plugin that we have developed to promote inclusivity with respect to assignments. The recording below gives all of the details along with a quick demo but in a quick summary this plugin enables students to create a structured plan for completing the assignment.

I would really be interested to hear your views on the plugin and welcome suggestions for improvements.

Giving students choice of assignment to improve academic integrity

In my last post I listed the factors that compromise academic integrity: the pressure, the opportunity to cheat and the rationalisation that students may have to cheat. Following on from that, I suggest that we can reduce opportunity and rationalisation by giving students choice with their assessment. You can start off simple by using something like the Moodle Choice tool to allow them to choose their topic. In the video below the wonderful Mary Cooch explains how the “choice” tool works.

As Mary expertly explains, there are numerous options open to staff when using the choice tool. Let’s just imagine that you have a class of 30 students and you give them 5 topics to choose from. You can limit the number of students that can choose each option and you can make the results anonymous. This means that students immediately have a limited number of colleagues that they can copy from, as the others are doing different topics. Furthermore they have an opportunity to choose their preferred topic, which should increase “student ownership”, a great approach to reducing any rationalisation that students may have to cheat. As an added bonus for the lecturer, you don’t have to correct 30 essays all on the same topic.
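
To make the 30 students and 5 topics example concrete, here is a small sketch in Python of what a per-option limit does. This is not how the Moodle Choice tool is implemented and it does not use any Moodle API. The function names, the ranked preferences and the first come, first served rule are all my own assumptions for illustration.

```python
import math


def option_limit(class_size: int, topic_count: int) -> int:
    """Smallest per-topic cap that still gives every student a place."""
    return math.ceil(class_size / topic_count)


def allocate(preferences: dict[str, list[str]], limit: int) -> dict[str, str]:
    """Give each student, in order of arrival, their first topic with a free place."""
    taken: dict[str, int] = {}
    allocation: dict[str, str] = {}
    for student, ranked_topics in preferences.items():
        for topic in ranked_topics:
            if taken.get(topic, 0) < limit:
                taken[topic] = taken.get(topic, 0) + 1
                allocation[student] = topic
                break
    return allocation


if __name__ == "__main__":
    topics = ["Topic A", "Topic B", "Topic C", "Topic D", "Topic E"]
    # Worst case: every student ranks the topics in the same order.
    preferences = {f"student{i:02d}": topics for i in range(1, 31)}
    limit = option_limit(class_size=30, topic_count=5)
    allocation = allocate(preferences, limit)
    for topic in topics:
        count = sum(1 for chosen in allocation.values() if chosen == topic)
        print(topic, count)
```

Even in the worst case where everybody wants the same topic, the limit spreads the class evenly, so any one student shares a topic with only five others.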

Another element of choice that you can provide, which will also help reduce the rationalisation to cheat, is to allow students to choose their mode of assessment, i.e. some can write an essay, others can record a podcast or do a video. Just like the previous example, this increases student ownership. If you decide to combine the two suggestions you reduce the chance of plagiarism even further, because even if students A and B have the same topic they may choose different modes of delivery, so it is harder for them to copy off one another.

Just as a word of warning though – too much choice can be a bit overwhelming to students so be careful to not go to the extreme and offer too much choice. What other ways do you think you could offer choice to students to help reduce rationalisation and opportunity to cheat?

Prevention is better than cure – when it comes to catching plagiarism

It is better to remove the opportunity to plagiarise than to have an assignment that presents students with that opportunity.

The keynote from the founder of Moodle, Martin Dougiamas, at this year’s Ireland and UK MoodleMoot provided several examples of how online services and products can be used to produce assignments that will not be detected by the standard text matching services that we have all grown to rely on. With these advances in technology and the ubiquitous access to information through the internet, plagiarism and academic integrity are a huge concern for education institutions. We all need to work together to reduce the factors that compromise academic integrity: the pressure, the opportunity to cheat and the rationalisation that students may have to cheat. It is a multifaceted problem and therefore requires a multifaceted set of solutions. For the purpose of this blog post I’m going to concentrate on one of those solutions, the design of assessments.

While there have been tremendous advances in text matching software like Ouriginal and TurnItIn in recent years, it is really a case of “the horse has already bolted”, to use an old phrase. If the gate was never open in the first place the horse would never have escaped. If the assignment is designed with Academic Integrity in mind, it is more difficult for the student to plagiarise. I’m not saying impossible, just more difficult. Remember that assessment design is only part of the solution.

As part of an Erasmus project we have had the opportunity to work with partners in Georgia, Austria, Sweden and the UK to help academics design the opportunity for plagiarism out of assignments.

One of the outputs from this project was the “12 Principles of Academic Integrity” in relation to design of assessments.

- Set consistently high academic integrity standards which value university, programme and student/graduate reputation.
- Provide detailed information and direction on how students might avoid breaches of academic integrity and ensure consistency across a programme team.
- Regularly update and edit assessments and programme assessment strategies.
- Use marking criteria and rubrics to reward positive behaviours associated with academic integrity.
- Design assessments that motivate and challenge students to do the work themselves.
- Ensure assessments are authentic, current and relevant.
- Adopt a scaffolded approach to assessments for learning with feedback points throughout the assessment process.
- Consider assessment briefs that have open-ended solutions or more than one solution.
- Design in elements for students to record their individual pathways of thinking, demonstrating students’ own work.
- Design assessments which allow learners to prepare personalised assessments (either individually or group based).
- Build in a form of questioning or presentation/viva type defence component.
- Co-design assessments or elements of assessment with students.

These principles are derived following a comprehensive review of the literature which focussed on two main questions:

- What approaches to assessment design are used to promote or maintain academic integrity?

- What recommendations are being made on using assessment design to support academic integrity?

Over the next few weeks I will share examples of how an educator may, using a variety of learning technologies, integrate these principles into their assessment design. For now I will leave you with a short interview with Professor Phil Newton. Phil is the Director of Learning and Teaching, having oversight of all taught programmes within the Swansea University Medical School. His research interest is in the area of Evidence-based Education, particularly Academic Integrity. I had the pleasure of interviewing Phil a while back and want to share his words of wisdom again.

Do course templates really work?

A few years ago we introduced a template for our Moodle course pages to help ensure that our courses meet minimum standards with regard to course design, specifically universal design for learning. We had numerous features within this template and we wanted to examine how many courses actually used them. Rolling out the template is not a problem: we can do that as administrators and we can also easily customise the template for each course category.

Once each course page is created using these templates, the lecturer needs to edit the content provided through the template. For example our template provides a picture and the staff member is encouraged to swap this picture with a photo of themselves. Similarly we put in a generic email address (teacher@dcu.ie) and encourage a lecturer to replace it with their own email address. Through ad hoc visits to course pages we realised quite a few lecturers were not inserting their own pictures or email addresses. We have over 2,400 course pages so manually checking every page is not practical. So with the help of Catalyst IT we developed a report to analyse all of the course pages. Because we have set up a category for each faculty and a subcategory for each school in that category, we can run reports on this basis. A sample of the report is visible in the screenshot below:

The next steps are to adapt the report to analyse additional parts of the template in the same way. At the same time as enhancing the report we will provide CPD to staff to emphasise the importance of taking the time to swap/replace key parts of the template with information specific to their course pages.

For those interested, here is the code that we used. As ever, any suggestions for improvement would be very welcome:

    SELECT
        categories.name category,
        courses.shortname 'course (short)',
        CONCAT('', courses.fullname, '') course,
        CASE
            WHEN FROM_BASE64(configdata) LIKE '%teacher@dcu.ie%' THEN 'X' ELSE 'OK'
        END email,
        CASE
            WHEN FROM_BASE64(configdata) LIKE '%Avatar|%20pixabay.png%' ESCAPE '|' THEN 'X' ELSE 'OK'
        END avatar,
        CASE
            WHEN pos.visible = 0 THEN 'HIDDEN' ELSE 'VISIBLE'
        END visible,
        teachers.teacher
    FROM prefix_block_instances blocks
    LEFT JOIN prefix_block_positions pos ON blocks.id = pos.blockinstanceid
    -- 50 = course context, so only blocks placed on course pages are matched to courses
    LEFT JOIN prefix_context instances ON blocks.parentcontextid = instances.id
        AND instances.contextlevel = 50
    LEFT JOIN prefix_course courses ON instances.instanceid = courses.id
    LEFT JOIN prefix_course_categories categories ON courses.category = categories.id
    LEFT JOIN (
        SELECT
            GROUP_CONCAT(
                DISTINCT(users.email)
                SEPARATOR ', '
            ) teacher,
            courses.id courseid
        FROM prefix_role_assignments assign
        JOIN prefix_role roles ON roles.id = assign.roleid
        JOIN prefix_context context ON assign.contextid = context.id
        JOIN prefix_course courses ON context.instanceid = courses.id
        JOIN prefix_user users ON assign.userid = users.id
        WHERE roles.shortname = 'editingteacher'
        AND context.contextlevel = 50
        GROUP BY courseid
    ) teachers ON courses.id = teachers.courseid
    WHERE blockname = 'html'
    %%FILTER_SUBCATEGORIES:categories.path%%
    AND (
        FROM_BASE64(configdata) LIKE '%Avatar|%20pixabay.png%' ESCAPE '|'
        OR FROM_BASE64(configdata) LIKE '%teacher@dcu.ie%'
    )
    ORDER BY categories.name, courses.fullname
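
For anyone who wants to see the idea behind the two placeholder checks outside of SQL, here is a minimal Python sketch. It assumes, as the query does, that the block's configdata is stored base64 encoded, and it simply looks for the template's placeholder email address and avatar file name in the decoded text. The function name and the sample data are made up for illustration; this is not part of the report itself.

```python
import base64

# Placeholder values taken from the query above.
PLACEHOLDER_EMAIL = "teacher@dcu.ie"
PLACEHOLDER_AVATAR = "Avatar%20pixabay.png"  # the |% in the SQL is a literal %


def check_block(configdata_b64: str) -> dict[str, str]:
    """Return 'X' for each placeholder still present in the block, else 'OK'."""
    text = base64.b64decode(configdata_b64).decode("utf-8", errors="replace")
    return {
        "email": "X" if PLACEHOLDER_EMAIL in text else "OK",
        "avatar": "X" if PLACEHOLDER_AVATAR in text else "OK",
    }


if __name__ == "__main__":
    # Hypothetical block content: email replaced, avatar still the template one.
    sample = base64.b64encode(
        b'<img src="Avatar%20pixabay.png"> Contact: jane.doe@dcu.ie'
    ).decode("ascii")
    print(check_block(sample))
```

The report does the same thing for every HTML block in one pass, which is why it scales to thousands of course pages where manual checking does not.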

#Moodle Board is now Available

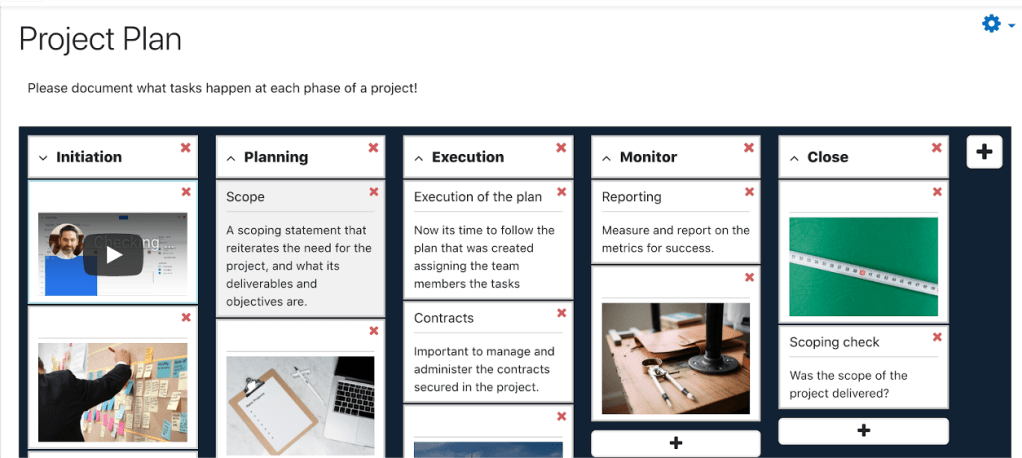

Earlier in the year there was a lot of interest in the plugin that we were building for Moodle that will provide the option for students and staff to contribute their posts on an “ideas board”, similar to commercial platforms such as Trello, Padlet and Lino where a student can add some text, link, image or YouTube video.

I’m delighted to share the news that Moodle Board is now available on the Brickfield GitHub and on the Moodle.org Plugin Database.

Following feedback received on the initial post we have added some key features to help improve the plugin. In addition to all of the features mentioned in the original post, we have added the ability to upload a background image, to allow students and staff to rate posts (and to sort the posts based on their ratings) and to restrict students from adding to the board after a particular date.

I’d like to express huge thanks to both Athlone Institute of Technology (AIT) and University College London (UCL) for supporting the development of this plugin and Brickfield Education Labs for their stewardship of the development and maintenance of the project. This project is a great example of an inter institutional collaboration which created sustainable impact through open source development that will increase student engagement.

5 uses of #Moodle Board to engage students

- Introductions / Icebreakers

The most basic of them all. Ask your students to introduce themselves by providing a few lines of text, posting a picture or adding a weblink to their portfolio page. It is something simple to get students engaged.

- Muddy points / Exit tickets

There are a variety of names for this approach but regardless of what you call it, asking students to post a note at the end of the class can be very helpful, whether you are asking them if they had any “muddy points” (aspects of the class that they do not fully understand) or just asking for general feedback. Engaging the students in this way can help improve the learning experience for the student as well as help the lecturer reflect on their teaching.

Using the “restrict access” feature of Moodle a teacher can set up numerous boards at once but release them when required.

- Crowdsourcing content

Post a link to a journal article and include a few lines of introduction, or post a YouTube video or a link to a website. Either way, co-creating content relevant to your module with your students is a very effective way to engage them.

- Zoom whiteboard

The Zoom whiteboard is a great tool when using breakout rooms. Students can use the whiteboards to share thoughts and reflections as a group. However, sharing the whiteboard content with the rest of the class once the breakout rooms are over is not that easy. Now a teacher using Moodle Board can create a board for each breakout group. These boards can then be shared with the entire class or be kept private for each group using the standard Moodle features.

- SWOC analysis

Strengths, weaknesses, opportunities and challenges (SWOC) analysis is a technique used to identify the external and internal factors that play a part in whether a business venture or project can reach its objectives. Whether students are doing a project individually or as a group, a Moodle Board can be used to create a SWOC analysis. Like the example above, using the groups and grouping feature of Moodle allows the teacher to create a separate board for each group in one quick step by simply creating one Board activity and then choosing “separate groups” in the settings.

For more details on Moodle “Board” please visit “How to add a Post it Board to your Moodle course”.

How to add a Post it Board to your Moodle course

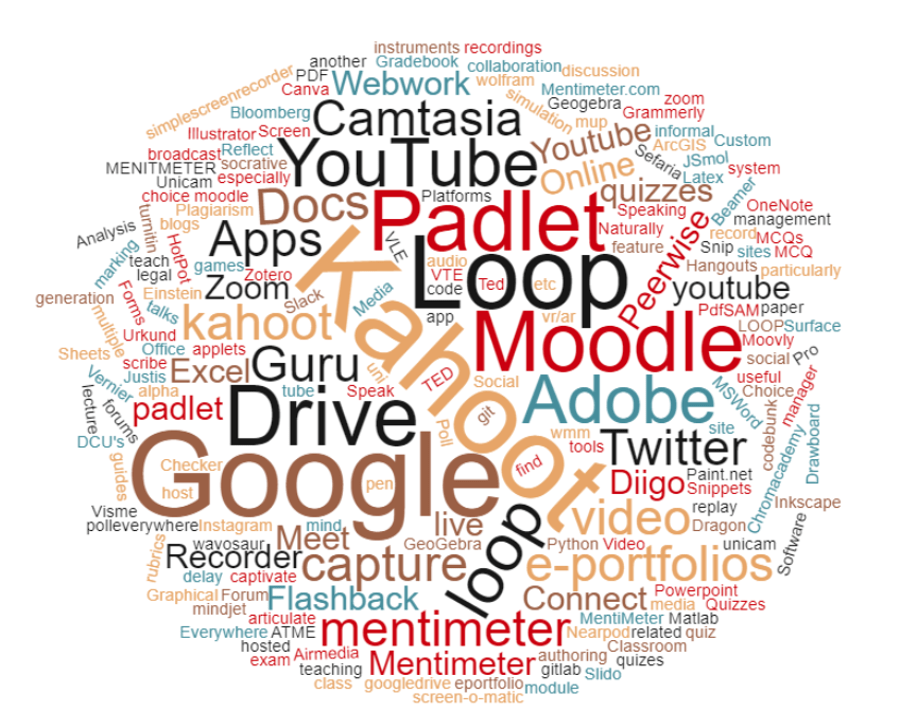

Student engagement has always been a popular subject and there are numerous methods to facilitate that engagement. For every method of engagement there are probably ten times as many tools and software packages available for people to use, each tool claiming to be better than the rest or offering exclusive functionality when compared to its rivals. Recent responses from DCU staff to the National Index survey highlight that our staff use a wide array of these tools.

While each one of these tools may be brilliant, it is not practical to have a licence for every one and the free versions always come with a hidden price – either limited functionality or poor data protection (in the majority of cases both). So instead of choosing one specific platform and paying an annual licence we have decided to develop Moodle “Board”.

Board provides the capability for a student to post a “note on a board” anonymously, emulating a real life equivalent of writing a note on a “post-it” on a wall, or whiteboard.

Lecturers can categorise the posts by creating different “columns” on the board for students to place their notes in. Board works just as well on mobiles as it does on laptops. Feedback from our staff focus group was very positive and I look forward to hearing from student focus groups in the coming weeks.

Comparison to commercial platforms

Similar to most platforms, users of Moodle “Board” can post text, links, videos and images. However, because this is built in Moodle we have the “restrict access”, “activity completion” and “grouping” capabilities which are available in a lot of core Moodle features but not in these commercial platforms. As the board is an activity within a course, posts are restricted to students in that course as opposed to just having a URL publicly available.

Furthermore while the post appears anonymous on the surface a lecturer has the ability to export the content to a CSV file and link the usernames to the “posts” within the CSV file.

We don’t have it perfect and have listed a few enhancements for future developments and would welcome further suggestions from you.

Features/Capabilities to be added after beta testing subject to funding:

- Ability for a teacher to reorganize the posts by dragging them from one column to another

- Ability to set activity completion e.g. student must add X number of notes to the board

- Pin one or more posts by teacher – Pinned post goes to top.

- Ability to “star” a post

- Ability to reorganize posts based on “stars” (currently only available by date of posting)

- Have a date & time for “post by” which stops students adding entries

- Lock an individual column

- Ability to access the board and post to the board even if the user does not have a Moodle account

Timeline

The plugin will be available for DCU staff in January 2021 and released to the Moodle community via the plugin database at the same time.

Developed by:

Learning Technology Services, Brickfield Education Labs

Commissioned by:

Dublin City University as part of our wider approach to the pivot online following the Covid19 pandemic (additional funding was provided by the National Forum through the EASTDOL project, led by DCU)

Does an increase in the flexibility of our courses result in reduced accessibility?

In the last 20 years there has been a nearly sixfold increase in the number of students in higher education declaring disabilities, equating to over 14,000 students in 2019. It is also worth noting that this number is just the students that declare a disability; I am confident that there are many more throughout the sector. For every “visible” disability, e.g. someone in a wheelchair or a blind student, there are many “invisible” disabilities such as dyslexia, autism and ADHD. As lecturers we will therefore never know if we have students with disabilities in our class, making the adoption of a “Universal Design for Learning” (UDL) approach more vital than ever. While we encourage lecturers to expand access and increase flexibility by moving towards a more blended provision of their courses, are they adhering to the principles of Universal Design? They are specialists in their respective disciplines and not necessarily web developers or accessibility experts. This post outlines how we assessed the accessibility of the course pages on our VLE (Moodle).

Figure 1: Number of students with disabilities in higher education

Last week I had the pleasure of presenting at the ALT (Association for Learning Technology) annual conference. I co-presented with Gavin Henrick from Brickfield Education Labs, describing the research conducted to evaluate the accessibility of course pages within our virtual learning environment. This presentation described how, using existing open source libraries, we built a reporting tool, and covered which checks were carried out, how they were carried out, and how the data was stored and reported on at module, programme and faculty level. As the report is available at these various levels, a lecturer can self-evaluate their own course pages and staff developers can identify the training and support that may be needed across an entire faculty.

A subset of the Web Content Accessibility Guidelines (WCAG) was chosen for this study. These guidelines, created by the World Wide Web Consortium, are a series of recommendations for improving web accessibility. Twelve separate modules within a programme were analysed with checks such as: are web links and images used on the courses accessible? Are headings within long passages of text used appropriately? The results, while promising, did highlight that there is still room for improvement.
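
To give a flavour of what one of these checks involves, here is a minimal sketch in Python of a single check: working out what percentage of the images in a piece of HTML have no alt attribute. This is not the code behind our reporting tool. The class and function names are hypothetical and it only uses the HTML parser from the Python standard library. An empty alt (`alt=""`) is deliberately not counted as a problem, since that is how decorative images are marked.

```python
from html.parser import HTMLParser


class ImageAltCounter(HTMLParser):
    """Counts <img> tags and how many of them have no alt attribute at all."""

    def __init__(self) -> None:
        super().__init__()
        self.total = 0
        self.missing_alt = 0

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        self.total += 1
        if "alt" not in dict(attrs):
            self.missing_alt += 1


def missing_alt_percentage(html: str) -> float:
    """Percentage of images in the HTML that have no alt attribute."""
    parser = ImageAltCounter()
    parser.feed(html)
    parser.close()
    if parser.total == 0:
        return 0.0
    return 100.0 * parser.missing_alt / parser.total


if __name__ == "__main__":
    page = (
        '<p>Week 1</p>'
        '<img src="lab.png" alt="Students in the lab">'
        '<img src="chart.png">'
        '<img src="divider.png" alt="">'
        '<img src="logo.png"/>'
    )
    print(f"{missing_alt_percentage(page):.0f}% of images have no alt text")
```

A real tool runs many checks like this across every activity in a course and stores the results so they can be rolled up to programme and faculty level.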

Figure 2 – Course checks per page

Figure 2 illustrates the results from one course in particular, showing that 5% of the images on this course have no alt text, 10% have issues with poor layout and 8% have poorly displayed links to other webpages. Figure 3 provides an alternative breakdown of the results, showing which feature of the VLE is throwing up the most issues. For example we can clearly see that the majority of the issues on this particular course are related to the Moodle “book”.

Figure 3 – Analysis of checks per Moodle feature

These are just two of the reports that are available with several more available both at a course and a programme level. We look forward to providing an update on the next stage of this research at the World Conference for Online Learning in Dublin later this year.

References

World Wide Web Consortium Web Accessibility Initiative. 2008. Web Content Accessibility Guidelines (WCAG). [Online]. [Accessed 2 April 2019]. Available from: https://www.w3.org/WAI/standards-guidelines/wcag/

Data on students in Irish Higher Education with Disabilities, 2018: Available from: https://www.ahead.ie/datacentre18-yearonyear